Cover of my School Study, 1971

At the age of eleven, I produced the illustration above for the cover of a “London Study” that we were required to write and illustrate at school. The study was created in connection with our school visit to the capital city, which had taken place in May 1971, just before I drew the cover.

As you may expect (given my interests), my cover drawing emphasized modes of transport. Additionally, I chose as the centerpiece a striking modern building to which we had paid a surprise visit during the trip, and which had substantially impressed me. Little did I know at that time that it would probably be my only opportunity ever to visit that iconic building.

The building in my drawing was the recently-built Post Office Tower (now known as the BT Tower). Even before that first visit to London, I was well aware of the existence of that structure, which was feted as a prime example of Britain’s dedication to the anticipated “White Heat of Technology”. In addition to its role as an elevated mount for microwave antennas, the Tower offered public viewing galleries providing spectacular views over Central London. There was also the famous revolving restaurant, leased to Butlin’s, the well-known operator of down-market holiday camps.

The Tower and its restaurant began to feature prominently in the pop culture of the time. An early “starring” role was in the comedy movie Smashing Time, where, during a party in the revolving restaurant, the rotation mechanism supposedly goes out of control, resulting in a power blackout all over London.

In the more mundane reality of 1971, our school class arrived in London and settled into a rather seedy hotel in Russell Square. One evening, our teacher surprised us by announcing an addition to our itinerary. We would be visiting the public viewing galleries of the Post Office Tower, to watch the sun go down over London, and the lights come on! Needless to say, we were thrilled, even though we had no inkling that it would be our only chance ever to do so.

There were actually several public viewing gallery floors, some of which featured glazing, while others were exposed to the elements, except for metal safety grilles. Fortunately, the weather during the evening that we visited was not exceptionally windy!

Concretopia

I’m currently reading the book Concretopia, by John Grindrod, which provides a fascinating history of Britain’s postwar architectural projects, both public and private.

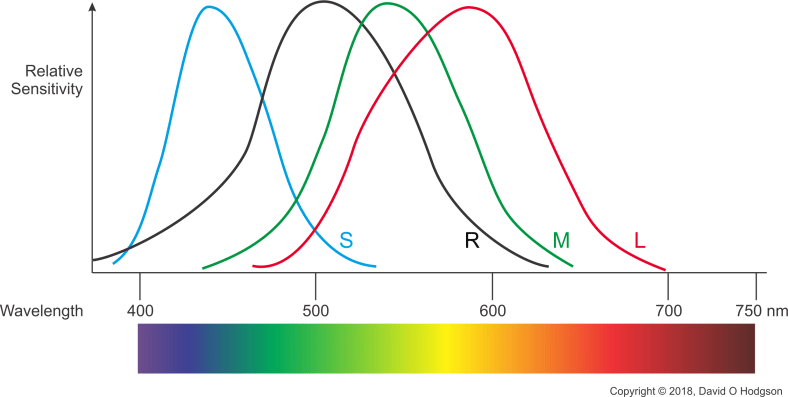

One chapter of the book is dedicated to what was originally called the Museum Radio Tower (referring to the nearby British Museum). It provides detailed descriptions of the decisions that led to the construction of the tower, and reveals that at least one floor is still filled with the original 1960s-era communications technology.

Due to subsequent changes both in communications technology and in British government policies regarding state involvement in such industries, much of the original function for which the Tower was built has now been rendered obsolete or moved elsewhere, leaving the building as something of a huge museum piece (ironically, in view of its original name).

The Once-and-Only Visit

In October 1971, a few months after my school class visit, a bomb exploded in the roof of the men’s toilets at the Top of the Tower Restaurant. Initially it was assumed that the IRA was responsible, but in fact the attack was carried out by an anarchist group.

Fortunately, nobody was hurt in the incident, but it drew attention to the security vulnerabilities created by allowing public access to the Tower. The result was that the public viewing galleries were immediately closed down, never to be reopened, and Butlin’s was informed that its lease on the Top of the Tower restaurant would not be renewed when it expired in 1980.

Nonetheless, the Tower continued to appear in the media as an instantly recognizable icon. At around the same time, it was supposedly attacked by a particularly unlikely monster, Kitten Kong, in the British TV comedy series The Goodies.

My younger brother took the same school trip to London two years after me, but it was already too late; the Tower’s public viewing galleries were closed, so he never got to see the London twilight from that unique vantage point.

The Unexpected Technologist

On that first visit to London in 1971, I had no notion that I personally would ever be a participant in the kind of exciting technological innovation signified by the Tower. In my family’s view, such advances were just something that “people like us” observed and marveled at, from a remote state of consumer ignorance.

I never anticipated, therefore, that I would return to London as an adult only ten years later, to begin my Electronics degree studies at Imperial College, University of London. I had to visit the University’s administration buildings in Bloomsbury to obtain my ID and other information, and there was that familiar building again, still looming over the area. (The University Senate House is also famous for its architectural style, but I’ll discuss that in a future post!)

My 1982 photo below, taken during my undergraduate days, offers an ancient-and-modern architectural contrast, showing the top of the Tower from a point near the Church of Christ the King, Bloomsbury.

Post Office Tower & Bloomsbury, 1982

The Museum Tower

The photo below shows the Tower again, during a visit in 2010, now with its “BT” logo prominently on display. Externally, the tower looks little different from its appearance as built, and, given that it’s now a “listed building”, that is unlikely to change much in future.

BT Tower, 2010

For me, the Post Office Tower stands as a memorial to the optimistic aspirations of Britain’s forays into the “White Heat of Technology”. It seems that, unfortunately, the country’s “Natural Luddites” (who C P Snow claimed were dominant in the social and political elite) won the day after all.
